Empirical Education Appoints Chief Scientist

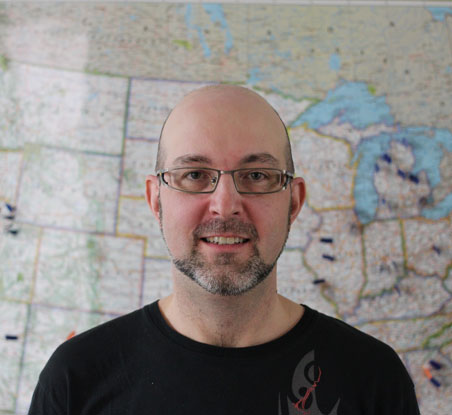

We are pleased to announce the appointment of Andrew Jaciw, Ph.D., as Empirical Education’s Chief Scientist. Since joining the company more than five years ago, Dr. Jaciw has guided and shaped our analytical and research design practices, infusing our experimental methodologies with the intellectual traditions of both Cronbach and Campbell. As Chief Scientist, he will continue to lead Empirical’s team of scientists, setting direction for our MeasureResults evaluation and analysis processes as well as for basic research into widely applicable methodologies. Andrew received his Ph.D. in Education from Stanford University.